So this new build is really just a remix of the original NSFW build, stuffed into one of the 48-slot Chenbro NR40700 cases listed in the forums via eBay. The only new parts are the case, a couple of new SFF-8087 to SFF-8087 cables to replace the breakouts, and the 3D-printed drive rails.

Moving from this (Supermicro 836):

to this (Chenbro NR40700):

The project started when the new case arrived. I got it for $180 + $110 shipping, but the same seller has had several auctions close at $169.99 + $110 shipping.

I plugged it in with a PSU loopback adapter just to make sure it powered on and to see how loud it was. It was louder than I expected, but that is due to the 120 mm fans, not the PSU as I was led to believe. That’s actually good, because I can control those.

I also had to print tons of the drive adapters so I could move my existing drives over. I tried several different caddies but could not find any that were compatible, so I just went with the 3D-printed ones.

I ended up printing them at 110% height, as the default ones were a little loose. Even 3 across.

Once I had the drive rails on hand, it was time to disassemble old faithful.

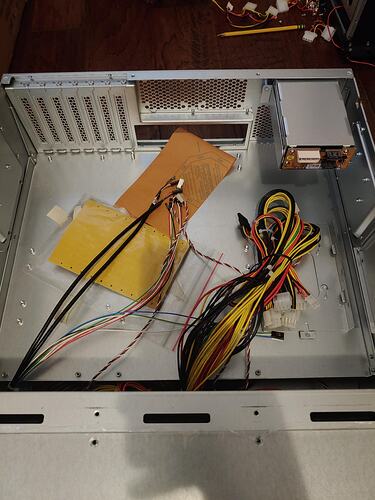

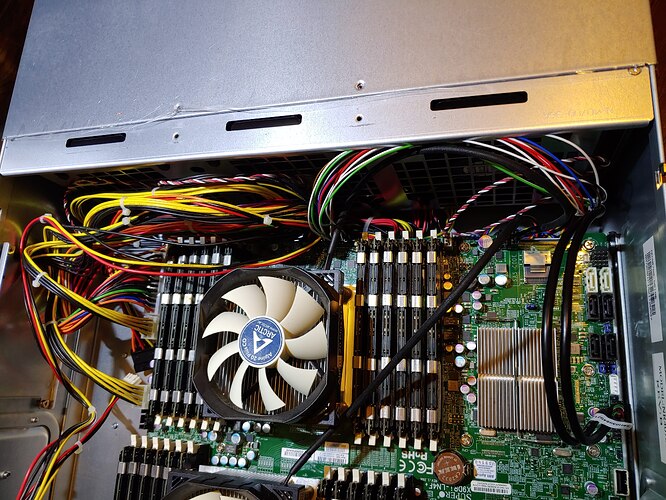

I forget how big this motherboard is until I have to work with it. Thing is massive. Here are the raw materials.

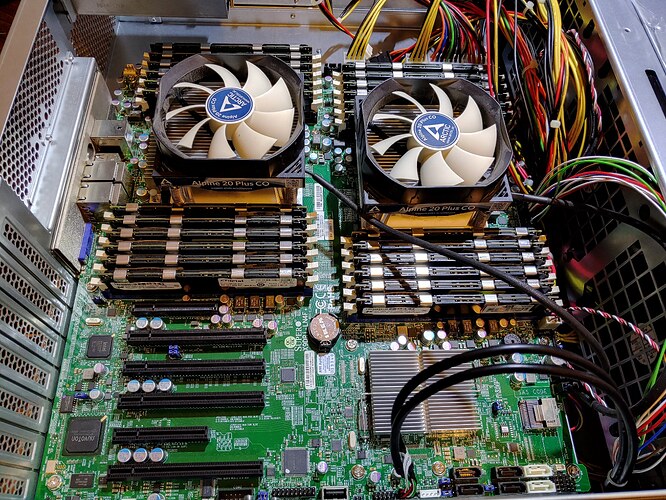

Top left is a Quadro K2000 used for my macOS VM, middle top is the 9201-16e for the DS-2246 shelf, the two on the right are 9211-8i cards, and middle bottom is a PCIe M.2 adapter with an Optane drive. Bottom left is a Quadro P400 for the Plex VM. Eventually I will move the 2.5" drives over to the new case, but I ran out of desire and time, so they are still external. (The 2.5" adapter tray prints keep failing at 90%.)

I did put both of the 9211-8i cards in even though both were not needed. My thinking was this: each card has 2 mini-SAS ports, each of which carries 4 channels of 6 Gbps. So that's 24 Gbps per port, or 48 Gbps per card. These are PCIe 2.0 x8 cards, which top out at roughly 32 Gbps usable. So by using only half of each card, I am reducing the chance of the PCIe slot being the bottleneck. Now, I know that spinners don’t use even a fraction of that, but I also know that I have the cards and the ports already. If I were buying cards, I most likely would use 1 card. I also know that as SSDs come down in price, a higher percentage of my storage will be SSD based. I plan on losing the 9201-16e soon, which will free up a slot, and if for some reason I need another I can combine them onto one card then.
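For anyone who wants to redo that bandwidth math for their own cards, here is a minimal sketch. It assumes SAS-2 lanes at 6 Gbps and a PCIe 2.0 x8 slot at 5 GT/s per lane, both with 8b/10b encoding (80% efficiency); those link parameters and the helper name `usable_gbps` are my assumptions for illustration, so swap in the numbers for your own hardware.

```python
# Rough bandwidth comparison: SAS ports on an HBA vs. the PCIe slot feeding it.
# Assumed (hypothetical) link parameters: 8b/10b encoding on both links,
# SAS-2 at 6 Gbps per lane, PCIe 2.0 at 5 GT/s per lane.

def usable_gbps(raw_gbps_per_lane: float, lanes: int) -> float:
    """Usable bandwidth in Gbps after 8b/10b encoding (8 data bits per 10 line bits)."""
    return raw_gbps_per_lane * lanes * 8 / 10

sas_port = usable_gbps(6.0, 4)    # one SFF-8087 port = 4 SAS lanes
sas_card = 2 * sas_port           # both ports on a two-port HBA
pcie_slot = usable_gbps(5.0, 8)   # PCIe 2.0 x8 slot

print(f"One SAS port : {sas_port:.1f} Gbps usable")
print(f"Both ports   : {sas_card:.1f} Gbps usable")
print(f"PCIe 2.0 x8  : {pcie_slot:.1f} Gbps usable")
print("One port fits within the slot:", sas_port <= pcie_slot)
print("Both ports fit within the slot:", sas_card <= pcie_slot)
```

Under these assumptions one port fits comfortably inside the slot's bandwidth while two fully saturated ports would not, which is the reasoning behind splitting the backplanes across both cards.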

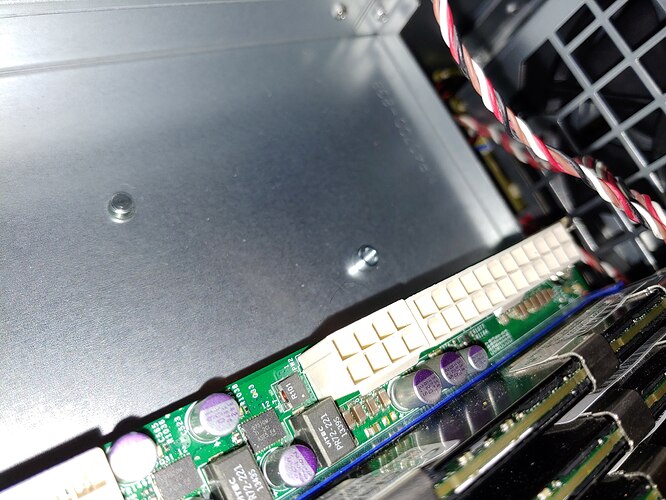

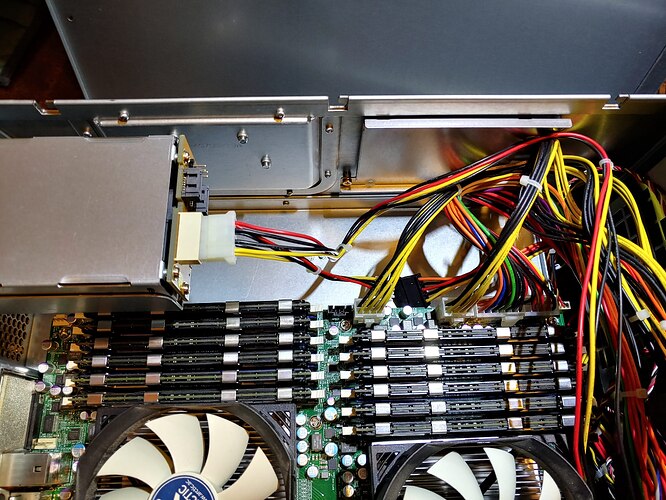

I didn’t test outside the case since I was working with all known good parts, but I did spend considerable time on the standoffs. The Chenbro case doesn’t have removable standoffs (first time I have seen that), so after a good 10 minutes trying to remove a couple, I realized that they are half-height standoffs. You only add the other half to the ones you want. Once that was out of the way, I found the case only had standoffs for the bottom 7 spots on the board. For the top 5, I was able to attach standoffs to the motherboard itself and use them more like legs, as the standoff plus threads was the same height as a whole standoff and keeps the board safely above any possible shorts. It was a tight fit and required several in and out tests, which were not easy.

Half standoff that's non-removable.

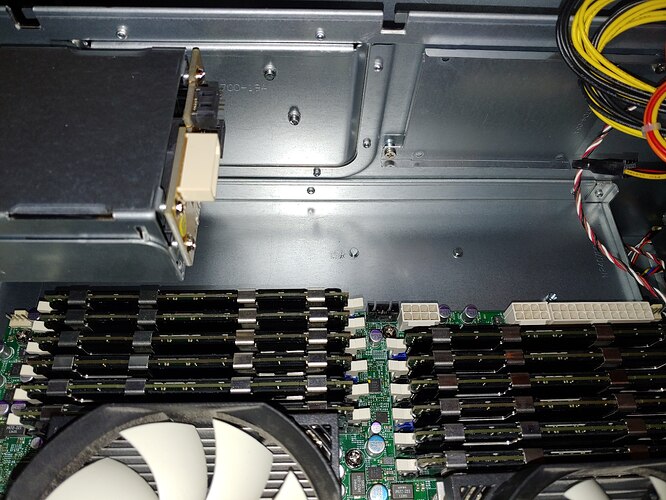

3.5" drive tray I had to remove for the motherboard to fit.

Fully populated with the cards. Prior to cable cleanup.

Next step was then to check to make sure everything was working.

Let me tell you that lifting this monstrosity onto the top of my rack was a chore. I am sure I will feel it tomorrow. But it was a success: everything was where it should be and ESXi booted. The VMs were in maintenance mode since I didn’t have the storage in yet.

Drives added, all slots on the backplanes are working, and all VMs are back online. I did eventually get it racked and will have to add a picture of that, but I will say that the rails from Chenbro are some of the worst I have ever worked with. They are not smooth, and there is very little forgiveness when sliding the case in the first time. I had to get my 12 year old daughter to help rack it, because I couldn’t guide the rails and hold the weight of the case at the same time.

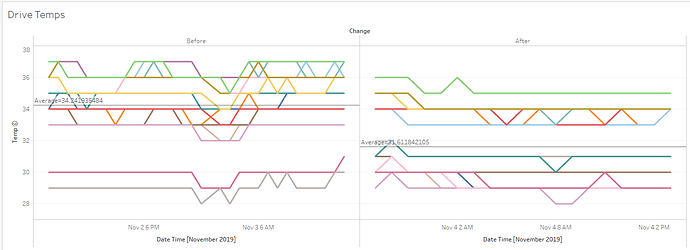

Now that everything is up and running, it is quieter than the Supermicro 836 it replaced, and the drives are running cooler. That may change once the bays are fully populated, but for now it was worth it. The sound levels will drop further once I get the 8 SSDs in that back row and can shut down the DS-2246 shelf.